Retrieval practice during lessons: the ups & downs of my experience

For years, I toiled with quiz questions (AKA core questions) in my biology courses. I used them as starter quizzes, to summarise key points of an explanation, as homework, and for cover work. It didn’t work out as I had expected.

I warned about their potential problems long ago: they can fragment course content into meaningless chunks. Rob Coe also expressed his doubts about retrieval practice in the classroom.

Nevertheless, I made real efforts to make it work and spent considerable time meditating on the best design for quiz questions. I dedicated a whole section to it in Difference Maker. There, I argued that the questions should be:

- Revision tools – they should provoke students to “re-call”, not to learn in the first place.

- Unambiguous – students are expected to come to the same answer, so questions shouldn’t permit varied answers that can lead to confusion.

- Short but not fragmented – the answers should be short enough to be memorable, but not so short they break up relationships.

- Enable thinking – there should be variation in question type to avoid developing an ability to re-call alone.

In this post, I’ll share the data I collected on retrieval practice quizzes and what I’ve learnt from them.

All of the data I’ll present comes from my IB Biology courses (16-18-year-olds) across three cohorts. The content load of these courses is heavy. As an incentive for students to stay on top of it, I gave starter quizzes (testing previously covered content) and collected weekly data. The students had access to the questions and answers. Why did I start collecting the data? Because it was easy to collect, and I thought it could reveal something interesting.

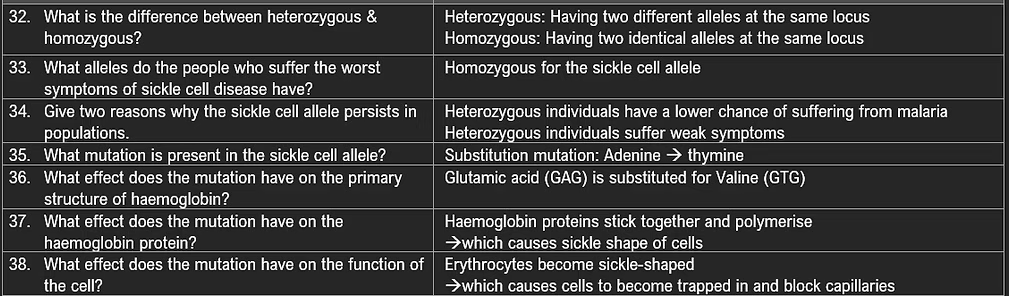

The questions were randomly chosen (mainly because I didn’t have the time to choose them). The total number of questions is high (almost 1400 for the entire course), and they were designed around the course specification. An example is shown below.

The data below aren’t from tightly controlled experiments. There were no control groups, and there was natural variation among students (including study effort). Another issue people may mention is the method of the quizzes. However, although teachers may differ in how they implement quizzes, the desired outcome is the same: that students remember the answers.

The current trend of using quizzes in England (as I perceive it from social media) is based on the assumption that if students remember these facts, they’ll know more. This frees up working memory to understand more, be more successful, and, therefore, be more motivated. Did knowing the answers to the quizzes improve their performance on exams?

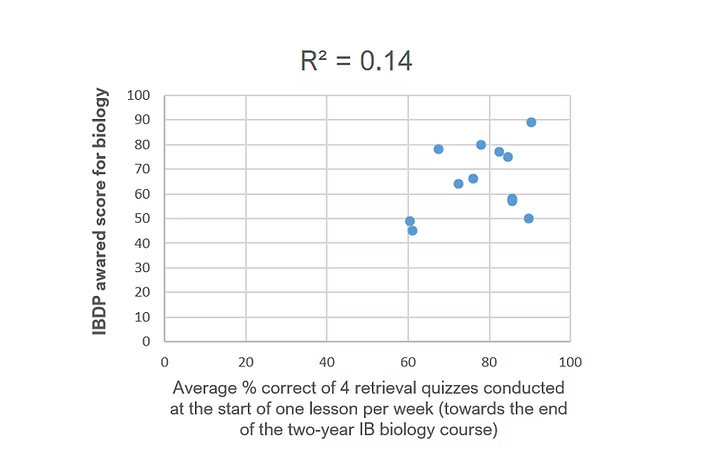

I began collecting the data towards the end of a two-year IB biology course, so for this first set of data, the sample size is small: just four weeks, and therefore four quizzes. To begin with, I collected the data to follow my students’ progress. Below is a scatter plot of the average percentage correct on the quizzes versus their official IB Biology scores.

The correlation is very low, but so is the sample size. By the end of the course, the amount of content already covered was large, and the number of questions they were asked was small.
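
For anyone who wants to run the same kind of check on their own class, here is a minimal sketch of how an average quiz percentage per student can be compared with an exam score using a Pearson correlation. The student names and all the numbers are made up for illustration and are not the data in this post; the function name `pearson_r` is my own choice, not from any particular tool.

```python
from math import sqrt


def mean(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least 2 points")
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return cov / sqrt(var_x * var_y)


# HYPOTHETICAL numbers for illustration only (NOT the data from this post).
# Each list holds one student's percentage correct on four weekly quizzes.
weekly_quiz_pct = {
    "student_a": [60, 55, 65, 60],
    "student_b": [85, 90, 90, 85],
    "student_c": [80, 85, 90, 85],
    "student_d": [70, 75, 70, 65],
}
exam_score = {"student_a": 50, "student_b": 52, "student_c": 88, "student_d": 61}

students = sorted(weekly_quiz_pct)
quiz_avg = [mean(weekly_quiz_pct[s]) for s in students]  # x-axis of the scatter plot
exam = [exam_score[s] for s in students]                 # y-axis of the scatter plot

r = pearson_r(quiz_avg, exam)
print(f"r = {r:.2f}, r squared = {r * r:.2f}")
```

With only four quizzes per student, each average rests on very few questions, so a single bad week can move a point a long way along the x-axis. That is one reason to read a low correlation from a small sample cautiously.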

But we can see some interesting trends. There were students who scored quite differently on the quizzes (60% correct, compared to 85-90%) but got a similar final score on the course (around 50). There were also students who scored highly on the quizzes (above 80% correct), yet their final scores ranged from 50 to 90.

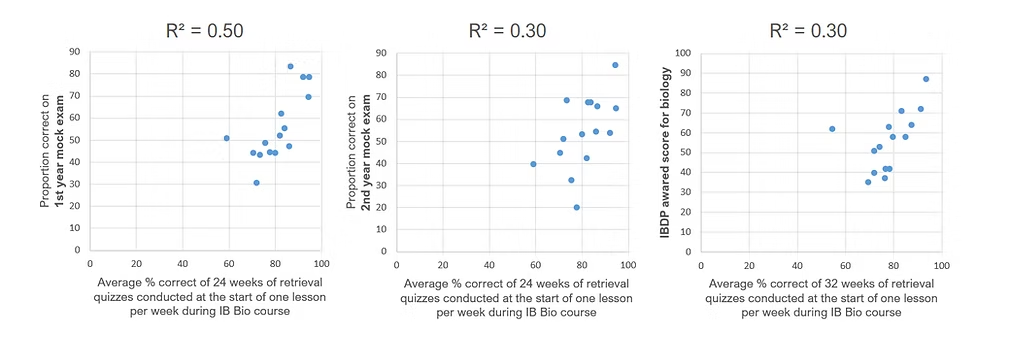

The results interested me enough to continue collecting data on a younger cohort. The next set of data comes from 24 quizzes by the end of the first year of their IB course and 32 by the end of the second (the 32 includes the previous 24).

In the scatter graphs below, there are three comparisons. The overall average % correct on the quizzes is compared with the first-year mock exam (May), the second-year mock exam (Nov), and the final score from exams in May. Between the first mock exam and the second, the students carried out coursework so there were no quizzes. Extra quizzes were carried out after the second mock exam, hence the different sample sizes.

Now we find a higher correlation between knowing the answers to the quiz questions and their exam results. The strongest relationship is found in the final graph. One student bucks the trend, but in general, there appears to be a positive relationship between the two.

And this makes sense, because in IB Biology exams, you do need to answer with the details and technical vocabulary to succeed, and knowing extra details should allow students to decipher more complex questions.

However, what separates the top and bottom official IB scores is only 15-20 percentage points on the quizzes. The worst-performing students on the quizzes (excluding the outlier) still answered over 70% of the questions correctly despite the heavy content load. Those students knew a lot of those answers. So there is much more involved than simply knowing the answers to quiz questions. This is clearer in the next cohort.

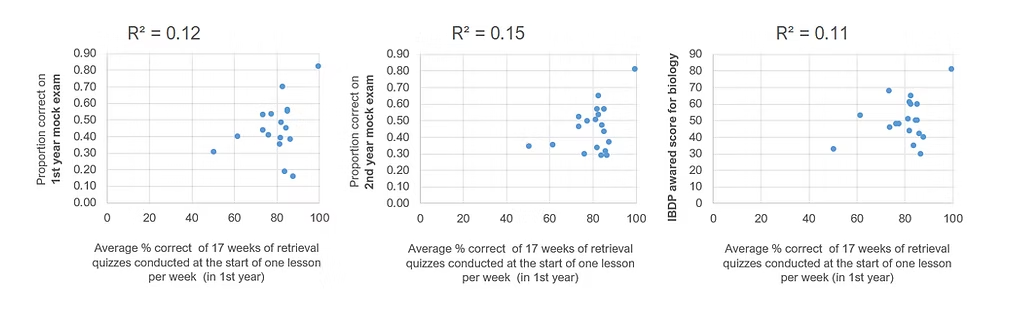

With this class, the sample size is smaller but still includes 17 quizzes over 17 weeks. All of the quizzes occurred in the first year of teaching. I stopped collecting data because I stopped the quizzes altogether. I’ll explain why in a minute. Here are the scatter graphs:

The correlations this time are much lower, only hinting at a positive relationship. By the first mock exam, most students got most of the quiz questions correct (around 80%). But whether or not they knew the answers to the questions told me little about their ability on exams. In the first mock exam, some students scored an average of above 80% on the quizzes, yet below 20 on the exam. This is despite the questions being designed around the IB course, its specification and its exam mark schemes.

Another interesting observation is that the weaker students improved in the second year despite the quizzes ending in the first year. The general pattern persists in the official exam results, suggesting some validity in the mock exams I designed (the R² for the May mock exam against the official result was 0.68, and 0.69 for the second mock exam).
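
As a rough illustration of what an R² figure like this measures, here is a minimal sketch that fits a straight line of official score against mock score and reports the share of variation the line accounts for. I am assuming the R² comes from a simple least-squares line, as a spreadsheet trendline would give; the scores below are invented for the example and are not my students' results, and the function names are hypothetical.

```python
def linear_fit(xs, ys):
    """Least-squares straight line y = slope * x + intercept."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length lists with at least 2 points")
    mx = sum(xs) / n
    my = sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("cannot fit a line when all x values are identical")
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = my - slope * mx
    return slope, intercept


def r_squared(xs, ys):
    """Coefficient of determination: 1 - residual variation / total variation."""
    slope, intercept = linear_fit(xs, ys)
    my = sum(ys) / len(ys)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - my) ** 2 for y in ys)
    if ss_tot == 0:
        raise ValueError("R squared is undefined when all y values are identical")
    return 1 - ss_res / ss_tot


# HYPOTHETICAL scores for illustration only (NOT the data from this post).
mock_scores = [20, 35, 50, 65, 80]
official_scores = [30, 38, 55, 60, 85]

r2 = r_squared(mock_scores, official_scores)
print(f"R squared of mock vs official: {r2:.2f}")
```

An R² of about 0.68 would mean that roughly two thirds of the variation in official scores lines up with a straight-line prediction from the mock scores, leaving about a third unaccounted for.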

So what can we make of this data? Firstly, across the three cohorts, knowing the answers to the quiz questions did not seem to be a dominant factor in how well students succeeded in showing their understanding on exams. While for one cohort the correlation was more clearly positive, it still showed that knowing details is important, but not enough.

Why did I stop the quizzes?

There is another factor I think is often missed in discussions on the importance of retrieval practice and knowledge recall. This is our students’ conception of learning – and of understanding – in our subject. This is because our students are adaptive agents and not just consumers of our explanations and quizzes.

While I collected the quantitative data, I also sought qualitative data on how students felt about their learning and the course. This was a constant endeavour through conversation and periodic emails. I found that the quizzes, despite the successes, were not motivating my students.

They were generally motivated to get good grades, but the quizzes seemed to negatively affect their view of biology as a subject. The students were more inclined to see biology as rote learning, and they didn’t like that. And this ultimately affected their ways of learning: how they organised and invested their attention in lessons.

This shows why we have to assess the effectiveness of pedagogical assumptions. We need to probe the system and reflect on what emerges from the interactions between teacher, students, curriculum, and context. It’s why I encourage other teachers to keep data, whether quantitative or qualitative, to check their assumptions.

While there is supporting evidence for retrieval practice in labs, we also need to check how quizzes interact in the real ecologies of the classroom. For example, when the EEF conducted a large classroom experiment comparing retrieval practice starters to discussion starters, they found it made no difference.

I stopped the quizzes in my classroom, but I didn’t throw out the baby with the bathwater. The quiz questions remain within the courses, just in a more nuanced niche, due to the lessons I’ve learnt.

I write them as a planning tool to think about what I’m going to teach. And I continue to encourage them as a revision tool alongside other techniques that focus on generating understanding.

This is how I prefer it, because my priority is establishing a culture of learning that helps students understand what understanding is in my subject. Rather than employing study techniques in the classroom, our lessons consist of conversation that coordinates meaning. See how, in Teaching Meaning.